In the previous articles in this series, we talked about the tsunami of digital transformation, the three waves impacting our clinics, the manual for evaluating AI tools, the automation toolkit, and the Hero’s Work. Now I want to address something that is happening on a massive and silent scale in thousands of veterinary clinics worldwide: professionals are beginning to use language models like ChatGPT, Claude, or Gemini in their daily work. And the vast majority are using them wrong.

I do not say this as criticism. I say it with the same urgency I would use to say that a poorly administered drug does not just fail to cure — it can cause harm. Language models are extraordinarily powerful tools, but like any powerful tool, they need a method, judgment, and clear boundaries to be truly useful rather than becoming a source of errors disguised as efficiency.

The 90% mistake

One figure sums up the problem: by some estimates, 90% of people who use language models use them incorrectly. What does “incorrectly” mean? It means they treat them as a search engine, a sophisticated Google where you ask short questions and expect factual answers.

“What dose of amoxicillin for a 4-kilogram cat?”

This is the typical question a veterinarian would ask an LLM. And the LLM will give a response that sounds perfect, is well-written, has the right format, and… may be completely wrong. Or partially wrong, which is even worse, because a response that is 80% correct creates a false sense of security that can lead to erroneous clinical decisions.

The problem is not with the tool. It is with how we use it. A language model is not a search engine that retrieves data from a verified database. It is a language generator that predicts the most probable sequence of words given a particular input. It does not “know” anything in the human sense of the word. It predicts. And the quality of that prediction depends directly on the quality and specificity of what we provide it.

An LLM is not Google: understanding the difference changes everything

When you search for something on Google, the search engine returns web pages that contain the information you asked for. The information exists on those pages, it was published by someone, and you can verify the source. Google is an intermediary that connects you with existing information.

An LLM works in a radically different way. It does not search for information — it generates it. It constructs responses word by word based on the statistical patterns it learned during training. This gives it an astonishing ability to draft, synthesize, structure, and transform information. But it also gives it an inherent tendency toward hallucinations: generating content that sounds perfectly coherent and professional but does not correspond to reality.
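To make "generating word by word" concrete, here is a deliberately tiny sketch in Python. It is a toy, not how production models are built: real LLMs are large neural networks, while this one only counts which word follows which in three made-up sentences. The function names and the sample text are my own illustrative assumptions.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    followers = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def generate(followers, start, max_words=8):
    """Extend `start` by repeatedly picking the most frequent next word."""
    output = [start]
    for _ in range(max_words - 1):
        options = followers.get(output[-1])
        if not options:
            break  # no known continuation for this word
        output.append(options.most_common(1)[0][0])
    return " ".join(output)


# Hypothetical training text, invented for this illustration.
corpus = (
    "the cat needs a checkup . "
    "the cat needs a vaccine . "
    "the dog needs a checkup ."
)
model = train_bigrams(corpus)
sentence = generate(model, "the", max_words=5)
print(sentence)
```

The output reads like a sensible sentence, yet nothing in the code ever checked whether any cat actually needs anything. It simply followed the most frequent pattern. That is the mechanism behind fluent text that may not correspond to reality.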

Does this mean we should not use them? Not at all. It means we should use them for what they are extraordinarily good at, and stop asking them for what they cannot reliably give us.

An LLM is brilliant for drafting a personalized follow-up email for a client after a complex consultation. For turning your mental notes into a structured internal protocol. For generating ideas for your social media marketing. For preparing team meeting minutes from disorganized notes. For writing an empathetic and professional response to a complaint on Google Reviews. For creating the script for a difficult call to a pet owner.

All of that is management, communication, operations. And there, LLMs are absolutely transformative when used with method.

The missing method: from improvisation to system

The key is in that last phrase: “when used with method.” Because the difference between a veterinarian who gets extraordinary results from an LLM and one who gets generic, unhelpful responses is not in the tool, nor in the model they use, nor in whether they pay for the premium version. It is in how they formulate their instructions.

There is an analogy I particularly like because it comes naturally to us as veterinarians: using an LLM well is exactly like taking a good history. When you take a patient history, you do not tell the owner “tell me something about the dog.” You ask with structure: how long the symptoms have been present, what they eat, whether there have been recent changes, whether they are on medication, whether they have traveled. You provide a framework. You guide them to give you the information you need in the order you need it.

With an LLM, it works exactly the same way. If you give it a vague instruction, you get a vague response. If you provide detailed context, a clear role, a specific task, and a defined output format, you get a result that can be genuinely useful and directly applicable to your work.
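For readers who like to see the structure written down, the four elements above can be sketched as a small Python template. This is only one possible way to organize a prompt, under my own assumptions: the class name, field names, and the sample clinic scenario are hypothetical, and the resulting text is something you would paste into whichever chat tool you use.

```python
from dataclasses import dataclass


@dataclass
class StructuredPrompt:
    """Holds the four parts of an instruction: role, context, task, format."""
    role: str
    context: str
    task: str
    output_format: str

    def render(self) -> str:
        """Join the four parts into one labeled block of text."""
        sections = [
            ("Role", self.role),
            ("Context", self.context),
            ("Task", self.task),
            ("Format", self.output_format),
        ]
        return "\n\n".join(f"{label}: {text.strip()}" for label, text in sections)


# Hypothetical example: a management task, not a clinical one.
prompt = StructuredPrompt(
    role="You are the client-communication lead at a small-animal veterinary clinic.",
    context=(
        "A client left a two-star online review saying they waited 40 minutes "
        "past their appointment time. The delay was caused by an emergency case."
    ),
    task=(
        "Draft a reply that apologizes, explains the delay without making "
        "excuses, and invites the client to call the clinic."
    ),
    output_format="One paragraph, under 120 words, warm and professional tone.",
)
prompt_text = prompt.render()
print(prompt_text)
```

Compare the rendered text with a bare "write a reply to a bad review". The model receives the same kind of framework you give an owner during a history, so it has far less to guess.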

This structured approach is what separates the 10% who truly leverage these tools from the 90% who use them as an expensive Google. It does not require programming knowledge, IT expertise, or an understanding of algorithms. It requires learning to communicate effectively with a tool that, when properly directed, can multiply your operational productivity in ways that were unthinkable three years ago.

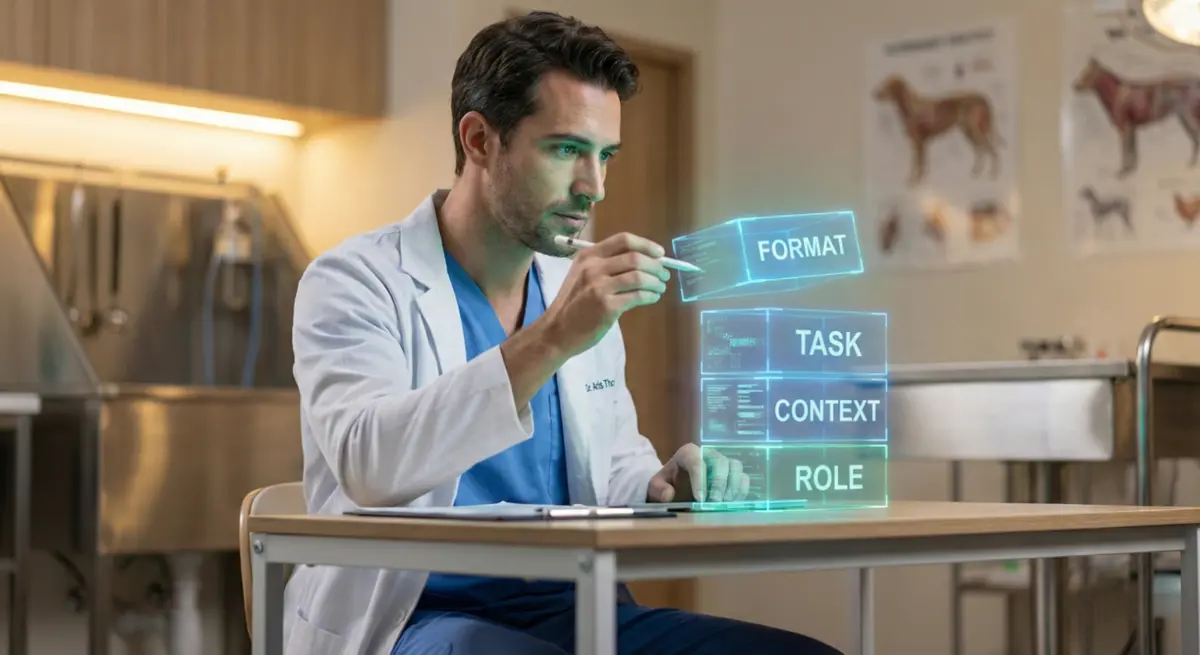

At KyberVet Academy, we have developed a complete course, “AI for Veterinarians,” designed specifically for veterinary professionals who want to learn to master LLMs applied to clinic management. It is not a technical or theoretical course. It consists of 27 practical lessons with real-world veterinary examples, organized into 7 modules that range from understanding what an LLM really is to mastering advanced prompting techniques, covering client communication, marketing, team management, and internal protocols. All built around our own method called RCTF: Role, Context, Task, and Format. A simple, memorable structure that is directly applicable from day one.

The red line: when an LLM is not enough

But there is something I need to be absolutely clear about, because it is a matter of professional responsibility: generic LLMs have a very specific limit when it comes to clinical practice.

A generic language model can help you write a brilliant email. It can generate a flawless internal protocol. It can transform your client communication. But when we are talking about clinical decisions, differential diagnoses, laboratory result interpretation, or drug dosages for a specific patient, things change radically.

Why? For three fundamental reasons that every veterinary professional must understand.

The first is reliability. A generic LLM has not been clinically validated for veterinary medicine. It has not undergone any process verifying its clinical responses against a reference standard. It has no published sensitivity or specificity metrics. You do not know how often it is right and how often it is wrong on a differential diagnosis — and in medicine, that is unacceptable.

The second is currency. Language models have a training cutoff date, so the clinical information they handle may be outdated. Protocols change, guidelines are updated, drugs are withdrawn or reformulated. On its own, a generic LLM has no way of knowing whether the dose it is recommending is still current or was revised six months ago.

The third, and perhaps the most dangerous, is false confidence. An LLM will give you a clinical response with the same confident tone and the same professional structure whether the answer is correct or a complete hallucination. It does not warn you when it does not know. It does not reliably show uncertainty. And that, in a clinical context where decisions have direct consequences for the health of a living being, is a risk we cannot accept.

Two worlds, two tools

This distinction leads us to a principle I consider fundamental for any veterinarian who wants to integrate AI into their practice responsibly: you need different tools for different problems.

For management, communication, marketing, operations, internal protocols, and everything that surrounds clinical practice without being clinical practice itself: generic LLMs, used well with an adequate method, are extraordinary. These are the tools you learn to master in a course like the one we designed at KyberVet Academy.

For clinical practice, result interpretation, diagnostic support, and decisions that directly affect your patients: you need tools specifically designed, trained, and validated for veterinary medicine. Tools like LAIKA, our clinical copilot, which was built from the ground up with verified veterinary data, has published performance metrics, and is designed to function as that always-available expert colleague we discussed in previous articles in this series.

LAIKA is not a generic LLM with a coat of veterinary paint on top. It is a clinical tool that understands your patient’s context, works with their history, integrates laboratory results, and reasons through differential diagnoses using an updated knowledge base supervised by veterinary professionals. It also documents your consultation automatically through intelligent scribing, freeing you from the administrative burden that steals so much of your time every day.

The combination of both tools is what truly turns a veterinarian into an Augmented Veterinarian: LLMs mastered with method for everything surrounding the clinic, and a specialized, validated tool for everything that happens inside the consultation room.

The veterinarian of the future is not the one who uses the most tools. It is the one who knows which to use at each moment. LLMs to manage. Specific tools to diagnose. Your own clinical judgment to decide. Always.

Responsibility starts with training

There is one final point I want to address that I consider of paramount importance: responsibility in the use of AI.

Every time a veterinary professional enters clinical case data into a generic LLM like ChatGPT, that data travels to external servers. Is it anonymized? Is it protected? How is it stored? Can it be used to train future models? These are questions that few professionals ask before pasting a complete clinical history into a chatbot window.

The privacy of clinical data is not just a legal matter (which it is, especially under GDPR in Europe). It is a matter of professional trust. Our clients trust that their pets’ information is protected, and that trust cannot be broken by careless use of tools we do not fully control.

Learning to use LLMs responsibly also means learning what type of information is safe to share and what is not, how to anonymize data before entering it, which models offer more robust privacy guarantees, and when it is preferable to use a specialized tool with clear data processing agreements and verifiable regulatory compliance.
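As a rough illustration of what "anonymizing before entering" can look like, here is a minimal Python sketch that replaces emails, phone numbers, and a list of known names with placeholders. Everything in it is an assumption made for the example: the patterns are simplistic, the `redact` helper is hypothetical, and the sample note is invented. Pattern-based redaction will miss identifiers it was not written for, so treat this as a first filter and still read the text before sharing it.

```python
import re

# Simplified patterns, assumed for illustration; real data will need more.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text, names=()):
    """Replace emails, phone numbers, and the given names with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    for name in names:
        # Whole-word, case-insensitive match for each name supplied by the user.
        text = re.sub(rf"\b{re.escape(name)}\b", "[NAME]", text, flags=re.IGNORECASE)
    return text


# Invented note; the owner, pet, and contact details are not real.
note = (
    "Owner Maria Lopez (maria.lopez@example.com, +34 600 123 456) "
    "reports that Luna has been vomiting for two days."
)
clean = redact(note, names=["Maria Lopez", "Luna"])
print(clean)
```

The names have to be supplied by you, because no simple pattern can tell that "Luna" is a patient rather than an ordinary word. That is exactly why a quick human check remains part of the process.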

All of this is covered in the security and privacy module of the KyberVet Academy course, because we are convinced that there is no point in teaching people to use a powerful tool without teaching them to use it safely. It would be like teaching surgery without teaching asepsis.

Training as a competitive advantage

We are at a pivotal moment. Veterinary professionals who invest now in learning to master these tools with judgment, method, and responsibility will have an enormous advantage over those who keep improvising or — worse still — ignoring that this transformation is happening.

It is not about becoming an IT specialist. It is about adding one more professional competency to our arsenal, just as we once learned to use digital ultrasound machines, automated biochemistry analyzers, or practice management software. It is one more tool. Extraordinarily powerful, yes. But a tool that needs a trained professional behind it to be truly useful.

90% use it wrong. You have the opportunity to be in the 10% who master it. And that, in an increasingly competitive market with increasingly demanding clients, makes a real, tangible difference in the quality of your service and the profitability of your clinic.

The best time to get trained was yesterday. The second best time is today. AI is not going to wait for us to be ready.

This article is part of the “AI & Veterinary Medicine” series by KyberVet. If you want to take the step and learn to master LLMs with method, visit KyberVet Academy and discover our “AI for Veterinarians” course.