In the previous articles in this series, we analyzed why the client is the true driver of digital transformation in veterinary medicine and what the three major waves impacting our clinics look like. Now it is time to get practical. Understanding that change is here is one thing; knowing how to act on it with judgment is quite another.

The reality is that, faced with the avalanche of tools, promises, and acronyms surrounding us, it is perfectly normal to feel what we might call innovation overwhelm. Every week a new AI tool appears promising to revolutionize veterinary medicine. Every conference dedicates sessions to the topic. Every vendor has added the words “artificial intelligence” to their sales pitch.

Faced with this saturation, it is easy to fall into one of two equally dangerous traps: analysis paralysis (doing nothing for fear of making a mistake, waiting for everything to become clear) or impulse buying (acquiring tools without a clear strategy, simply out of pressure not to fall behind). Both are understandable reactions, but neither is the right answer.

To avoid both traps, we need a system. A solid mental framework that allows us to understand what AI is, what we can realistically expect from it, and how to evaluate any tool we are offered with professional judgment.

Debunking the myth: what AI is (and what it is not)

Let us start by dismantling a fundamental misconception that contaminates most conversations on this topic. When people talk about Artificial Intelligence, many imagine a digital mind that thinks like us. An artificial consciousness that reasons, reflects, has intuitions, and makes decisions the way a human being would. Movies and science fiction have contributed enormously to this perception.

Nothing could be further from the current reality. We humans reason in an extraordinarily complex way. We use experience accumulated over years, clinical intuition forged through thousands of cases, emotional context, empathy with the patient and their owner, and a creative ability to integrate information from very diverse sources in ways that are genuinely original and adaptive.

Today’s AI, however sophisticated its responses may seem, works in a fundamentally different way. It is an extraordinarily powerful machine for recognizing statistical patterns. It has been trained on massive amounts of information (which can include veterinary textbooks, scientific articles, and large volumes of conversations and clinical records), and its job consists, in essence, of predicting the next word, the next data point, or the most probable association, given a particular input.

Let us use a concrete example. AI does not understand what pancreatitis is the way you understand it when you palpate a patient’s abdomen, observe their prayer position, and mentally connect with previous cases you have treated. But it has seen the word “pancreatitis” associated with vomiting, abdominal pain, elevated lipase, compatible ultrasound images, and certain patient profiles millions of times. And that is why it can make predictions and associations that turn out to be astonishingly accurate.
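To make the idea of "predicting the next word" tangible, here is a deliberately tiny Python sketch. It is not how modern language models are built (they rely on neural networks trained on vastly more data); it only counts which word most often follows another in a handful of invented sentences. The corpus and the function names `train_bigrams` and `predict_next` are made up for this illustration.

```python
from collections import Counter, defaultdict

# Invented toy "training corpus"; real systems learn from vastly more text.
CORPUS = [
    "pancreatitis causes vomiting",
    "pancreatitis causes abdominal pain",
    "pancreatitis causes vomiting",
    "otitis causes itching",
]


def train_bigrams(sentences):
    """Count, for each word, which words follow it and how often."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    if word not in following:
        return None
    return following[word].most_common(1)[0][0]


model = train_bigrams(CORPUS)
print(predict_next(model, "causes"))      # vomiting
print(predict_next(model, "radiograph"))  # None: never seen in training
```

The second call shows the flip side of pattern recognition: the toy model has nothing to say about a word it never saw during training.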

Does this make it less useful? Not at all. But it does radically change two things: how we should use it and what we can expect from it. It is not a colleague who thinks. It is a prodigiously powerful tool that amplifies our capabilities. And that distinction is crucial for using it well.

The goal is not for AI to replace us. The goal is for us to become Augmented Veterinarians: our clinical judgment and experience, combined with AI’s ability to analyze data at a scale we could never reach on our own.

The recipe for a good AI tool

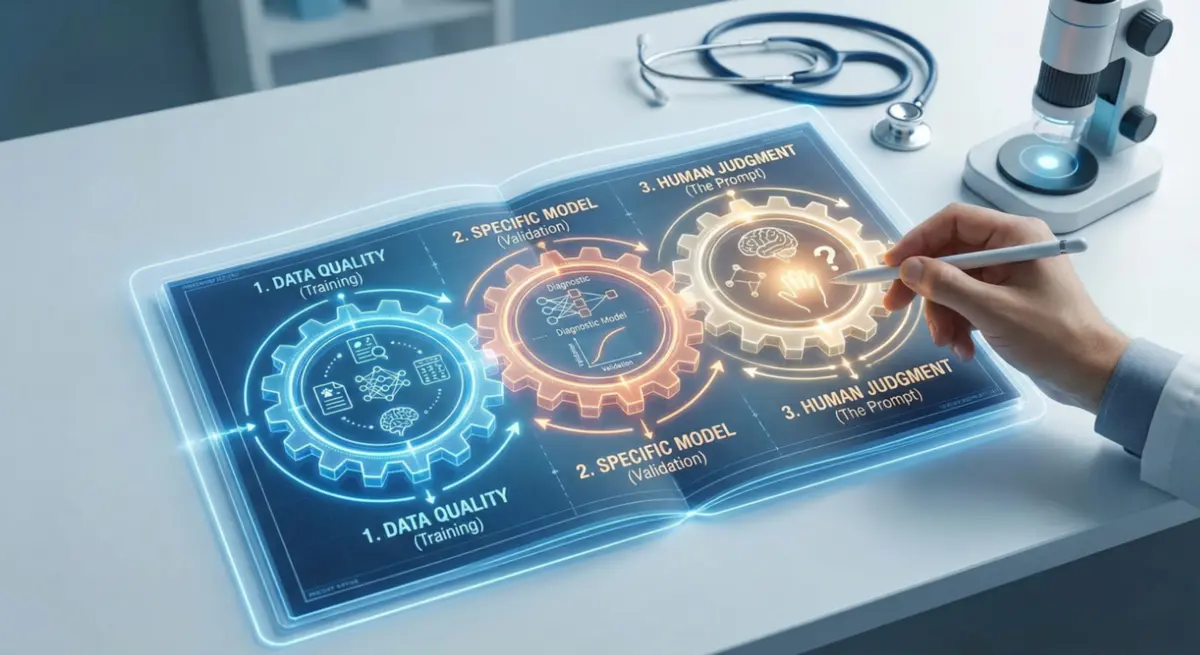

Now, how do we know if a specific AI tool is actually going to help us make better decisions, or if it is just a pretty wrapper with little substance inside? Think of it like a cooking recipe. We need three fundamental ingredients, and if just one of them fails, the result will be poor no matter how good the other two are.

Ingredient 1: The training data

AI only knows what it saw during its training phase. If it learned from biased, incomplete, outdated, or non-validated data, its recommendations will be unreliable. It is that simple and that decisive.

This is why the volume and quality of the data matter enormously. An AI trained on 100 chest radiographs will not be as accurate or reliable as one trained on 100,000 radiographs carefully labeled and reviewed by certified veterinary radiologists. Quantity matters, but the quality of labeling and expert supervision matter even more.

When evaluating a tool, always ask: what data was it trained on? How many cases has it seen? Where do those data come from? Who supervised the labeling quality? Do the data include the diversity of breeds, species, and clinical presentations that you encounter in your daily practice?

Ingredient 2: The model and its specific training

Not all AIs are the same, just as not all microscopes serve the same purpose. A language model trained to generate text is not suited to interpreting radiographs, and a neural network trained to detect fractures is not suited to transcribing consultations.

The tool must be specifically designed for the task it is going to perform and built on data from the veterinary domain, not on generic human medicine data superficially adapted. This is where we, as professionals, must learn to demand four things: independent validation of results, transparency in performance metrics, publicly declared known limitations, and sensitivity and specificity figures for each type of finding.

When a vendor presents you with a tool, never settle for a generic “this product uses advanced AI” or “our proprietary algorithm is state-of-the-art.” Ask for concrete, verifiable numbers. What is its sensitivity for the findings that matter most to you? What is its specificity? In which study was it validated? With how many cases and from what sources? Has it been evaluated by independent professionals? If they cannot or will not answer these questions, that is already a very eloquent answer in itself.
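For readers who want to see what those two numbers actually measure, the Python sketch below computes them from the results of a validation study. The counts are hypothetical, invented purely for illustration, and do not describe any real product.

```python
def sensitivity(true_pos, false_neg):
    """Share of truly affected cases the tool flags: TP / (TP + FN)."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg, false_pos):
    """Share of truly unaffected cases the tool clears: TN / (TN + FP)."""
    return true_neg / (true_neg + false_pos)


# Hypothetical validation study: 100 radiographs with the finding,
# 200 without it, each confirmed by a reference reading.
sens = sensitivity(true_pos=90, false_neg=10)
spec = specificity(true_neg=160, false_pos=40)

print(f"Sensitivity: {sens:.0%}")  # Sensitivity: 90%
print(f"Specificity: {spec:.0%}")  # Specificity: 80%
```

In this made-up study the tool misses 10 of every 100 affected patients and raises a false alarm on 40 of every 200 healthy ones. Whether that is acceptable depends on the finding and on how the result will be used, which is exactly why the figures should be requested per finding.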

Ingredient 3: Us (The prompt and our judgment)

The third ingredient — and often the most undervalued — is ourselves. The quality of the question we ask the AI, what is technically called the prompt, and the context we provide are absolutely decisive for the quality of the response we get.

And after the prompt comes something equally important: our ability to critically evaluate the result the AI returns. AI does not have the last word. We do — always. And our training, our clinical experience, and our knowledge of the specific patient are what allow us to distinguish a useful and applicable response from a perfectly written but clinically absurd hallucination.

The golden rule you must never forget

Everything above leads us to a rule you should burn into your professional memory: garbage in, garbage out. It is a universal principle of computing that applies with special force to artificial intelligence.

An AI tool does not work miracles. Once the tool itself is sound, how much value we get from it depends largely on two factors that are in our hands: the quality of the information we provide and the quality of the questions we ask.

Allow me to illustrate with a practical example you can try today. If you ask a language model “tell me about otitis,” you will get a generic, encyclopedia-style response. Perhaps useful as a basic general refresher, but without any real differential value for your daily clinical practice.

But if you say: “I am a veterinary technician. I need to explain to the owner of a 6-year-old cocker spaniel, using a simple analogy and without jargon, why it is essential to complete the full three weeks of otitis treatment even though the dog seems completely better after the first week. The owner is a very busy person who tends to abandon treatments when she sees improvement” — the response you get is radically different. It is specific, useful, empathetic, and directly applicable in your next interaction with that type of client.

The difference between the two responses is not in the AI. It is in us. In how we frame the question, how much context we provide, what role we define for ourselves and for the AI, and what level of specificity we request in the response.
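One way to turn those elements into a habit is to write them down as labelled parts before sending anything to the tool. The Python sketch below is only an illustration: the function `build_prompt` and its five field names are hypothetical, not a standard, and it simply assembles text without calling any AI service.

```python
def build_prompt(role, audience, task, context, constraints):
    """Assemble a structured prompt from labelled parts, skipping empty ones."""
    parts = [
        ("Role", role),
        ("Audience", audience),
        ("Task", task),
        ("Context", context),
        ("Constraints", constraints),
    ]
    return "\n".join(f"{label}: {text}" for label, text in parts if text)


# The otitis example from above, broken into its components.
prompt = build_prompt(
    role="I am a veterinary technician.",
    audience="The owner of a 6-year-old cocker spaniel, a very busy person "
             "who tends to abandon treatments when she sees improvement.",
    task="Explain why it is essential to complete the full three weeks of "
         "otitis treatment.",
    context="The dog seems completely better after the first week.",
    constraints="Use a simple analogy and no jargon.",
)
print(prompt)
```

Filling in only the task reproduces the generic "tell me about otitis" request; every additional field is context the model would otherwise have to guess.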

The professional skill of the future is not knowing all the answers. It is knowing how to ask the right questions to the tools that can multiply our impact.

This is called prompting, and it is a competency that all — absolutely all — veterinary professionals need to actively develop. It is not about learning to code or becoming an IT specialist. It is about learning to communicate effectively with a tool that can multiply our productivity and the quality of our work in ways that were unthinkable five years ago.

A framework for making informed decisions

With these three ingredients in mind, the next time someone presents you with a veterinary AI tool at a conference, during a sales visit, or in an online demo, you will have a clear, professional filter to evaluate it. Ask about the training data, demand transparent performance metrics, and test the tool yourself with real cases before committing.

This framework will protect you from both paralysis and impulse buying. It will give you the judgment to decide on solid ground rather than being carried away by the excitement of the moment or the fear of falling behind.

In the next article in this series, we will move from theory to full-on practice. We will open the augmented veterinarian’s toolkit and explore how AI-driven automation can give you back up to two hours a day currently spent on administrative work, time you can devote to what truly matters.

This article is part of the “AI & Veterinary Medicine” series by KyberVet. If you found it useful, share it with your team. Transformation is a journey best traveled together.